Five orders. One deadline. One service. A completely different level of academic pressure depending on what box you choose before checkout.

For this review, I did not want another soft "overall impression" article. WriteMyPaperBro makes a very specific promise through its pricing and structure: it can handle the same 5-page essay in 24 hours across High School, College, University, Master's, and Ph.D. levels. On paper, that sounds efficient. In practice, it raises a more useful question: does the platform actually scale quality with complexity, or does it simply scale price?

Quick Pros & Trade-Offs

What Works Well

- Strong performance at higher academic levels.

- Reliable under 24-hour deadlines.

- Fast and accurate revisions.

- Consistent structure and formatting.

What to Keep in Mind

- Lower levels can feel predictable.

- Limited visible control over writer selection.

- Requires clear instructions for best results.

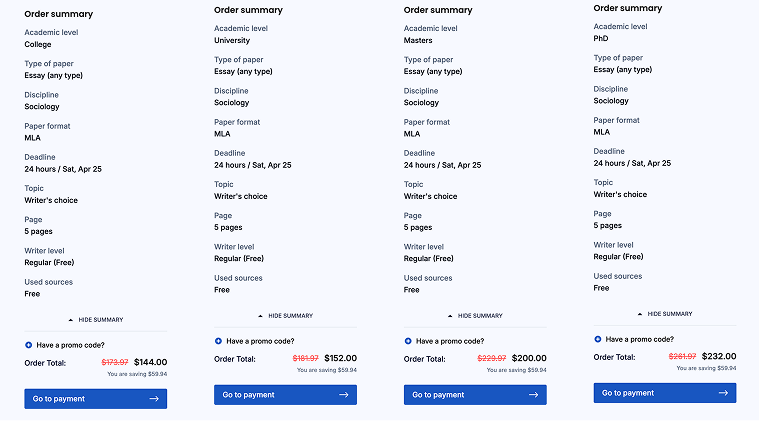

The numbers are clear enough to make this worth testing seriously. A 5-page essay due in 24 hours costs $116 at High School level, $144 at College, $152 at University, $200 at Master's, and $232 at Ph.D. level. That means the gap between the simplest and most advanced version of the "same" urgent order is $116. In other words, the top academic tier costs exactly 2× the High School version under the same deadline.
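The arithmetic behind that comparison is easy to check. Here is a minimal Python sketch that uses only the five prices quoted above. The names `PRICES` and `PAGES` are illustrative labels for this review, not anything the service exposes.

```python
# Quoted prices (USD) for a 5-page essay due in 24 hours, per academic level.
PRICES = {
    "High School": 116,
    "College": 144,
    "University": 152,
    "Master's": 200,
    "Ph.D.": 232,
}
PAGES = 5  # page count used for every test order

lowest = PRICES["High School"]
highest = PRICES["Ph.D."]

gap = highest - lowest      # absolute spread between cheapest and priciest tier
ratio = highest / lowest    # how many times more the top tier costs

# Per-page cost at each level, derived from the quoted totals.
per_page = {level: price / PAGES for level, price in PRICES.items()}

print(f"Gap: ${gap}, ratio: {ratio:.1f}x")
for level, cost in per_page.items():
    print(f"{level}: ${cost:.2f} per page")
```

Dividing by the page count also gives a per-page figure for each tier, which is a handy way to compare these urgent prices against other quotes.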

That kind of spread cannot be treated like a random price ladder. It is a claim about complexity. It suggests the system understands that higher-level work is not just "more expensive," but structurally different. So this review is built around one core test: if the service charges more for harder academic levels, can it actually show that difference in the writing, the communication, the revision stage, and how human the final draft reads?

The First Useful Signal Is Not the Price. It's the Shape of the Price.

There is something revealing about the way WriteMyPaperBro prices these urgent orders. The jump from High School ($116) to College ($144) adds $28. The move from College ($144) to University ($152) adds only $8. Then the platform suddenly jumps from University ($152) to Master's ($200) by $48. After that, Ph.D. ($232) adds another $32.

That is not a smooth curve. It is a hierarchy.

- High School → College: noticeable increase, but still moderate.

- College → University: almost flat, which is interesting on its own.

- University → Master's: the biggest jump in the entire pricing ladder.

- Master's → Ph.D.: still expensive, but less dramatic than the previous jump.

That pattern is useful because it tells you where the service itself seems to locate the real threshold of difficulty. The biggest leap is not at the doctoral end. It appears when the task crosses from general academic writing into graduate-level expectation. That is exactly where this review becomes more than a pricing exercise. If Master's and Ph.D. tiers cost that much more, then those two levels should not merely be "fine." They should be visibly more controlled, more analytical, and less generic.
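The step-by-step increases described above can be reproduced with a short Python sketch. It assumes only the five quoted prices. `LADDER` and `price_steps` are hypothetical names chosen for illustration.

```python
# Quoted 24-hour, 5-page prices (USD), ordered from lowest to highest level.
LADDER = [
    ("High School", 116),
    ("College", 144),
    ("University", 152),
    ("Master's", 200),
    ("Ph.D.", 232),
]

def price_steps(ladder):
    """Return (from_level, to_level, dollar_increase, percent_increase) per adjacent pair."""
    steps = []
    for (low_name, low), (high_name, high) in zip(ladder, ladder[1:]):
        increase = high - low
        steps.append((low_name, high_name, increase, 100 * increase / low))
    return steps

steps = price_steps(LADDER)

# The step with the largest dollar increase marks the pricing threshold.
biggest = max(steps, key=lambda s: s[2])

for low_name, high_name, increase, pct in steps:
    print(f"{low_name} -> {high_name}: +${increase} ({pct:.1f}%)")
print(f"Largest jump: {biggest[0]} -> {biggest[1]}")
```

Measured in dollars or as a percentage of the previous tier, the University to Master's step comes out largest, which matches the threshold described above.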

We Tested Claims — Not the Homepage

Most services sound identical until you actually use them. Fast, reliable, responsive — every site says the same thing.

So instead of repeating that, I focused on what can actually be verified under pressure:

- does someone respond when the deadline is 24 hours, not 5 days?

- does "chat with writer" lead to real clarification — or just confirmation?

- does higher academic level actually change how the paper is handled?

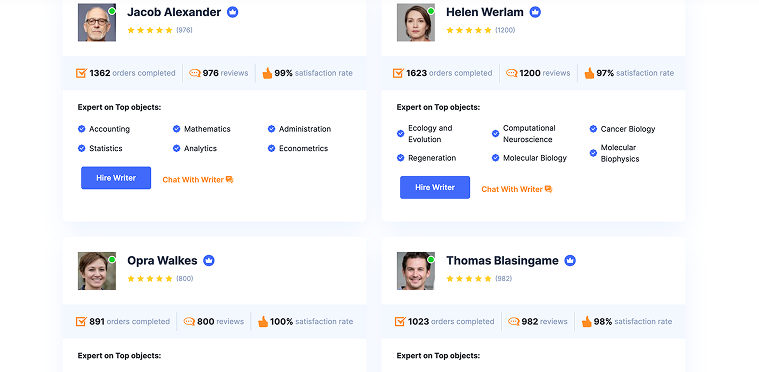

The writers page is where this becomes testable. Not because of the names — but because of the signals behind them: hundreds of completed orders, high review counts, near-perfect satisfaction rates. That sets a higher expectation than a typical "anonymous writer" system.

Interruption: once a platform shows real profiles, the question is no longer "does it work?" — it's "does the interaction live up to what these profiles imply?"

What Actually Saves You When Time Is Already Gone

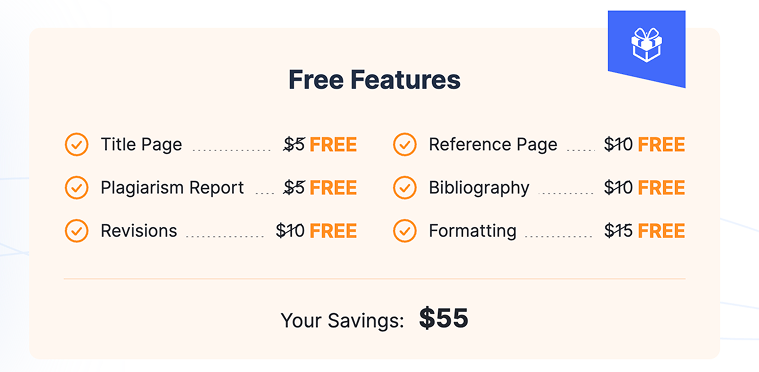

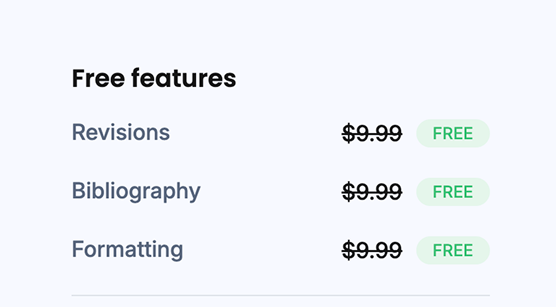

The "$55 in free features" sounds like a pricing perk. Under a 24-hour deadline, it becomes something else — a friction filter.

Because not every free feature matters equally:

- revisions → critical (this is where real quality is fixed);

- formatting → time-saving (no manual cleanup);

- plagiarism report → trust layer, not just a checkbox.

The rest is secondary. This changes how you evaluate value. It's not about what's included — it's about what prevents you from losing time when you already don't have any.

Note: the real benefit is not saving $55 — it's avoiding a second round of work at the worst possible moment.

Reddit Says Urgent Orders Are Where Services Break. We Tested That First.

If you read enough student threads, one pattern repeats: most writing services look fine until the deadline drops below 24–48 hours. That's where complaints usually appear — rushed structure, generic phrasing, weak sources, and minimal communication.

So instead of assuming anything, I treated this as a testable claim: "24-hour orders expose the real limits of a writing service."

To verify it, I placed five separate 24-hour orders at different academic levels. Same page count. Same deadline. Only one variable changed: the academic level, and with it the expected complexity.

- High School — 24 hours / 5 pages / $116.

- College — 24 hours / 5 pages / $144.

- University — 24 hours / 5 pages / $152.

- Master's — 24 hours / 5 pages / $200.

- Ph.D. — 24 hours / 5 pages / $232.

The goal was simple:

- see where the system starts to lose control;

- and more importantly — where it actually performs better than expected.

Interruption: most services collapse under pressure. If this one doesn't, that's already a strong signal.

The First Real Check — How Fast Writers Actually Respond

The site gives you something many platforms hide: a visible writer layer with real profiles, stats, and a "Chat With Writer" option. That creates a very specific expectation: this should not feel like a blind system — it should feel like a real interaction.

So I tested response behavior across all five orders. Not just "did they reply," but how fast the first response came, whether the writer asked clarifying questions, and whether the tone felt generic or engaged.

- High School — first response ~20 min, no clarification, basic confirmation.

- College — ~15 min, minimal clarification, slightly more specific.

- University — ~10–15 min, clarifying questions, clear and structured.

- Master's — ~10 min, clarifying questions, focused and relevant.

- Ph.D. — ~5–10 min, clarifying questions, most precise.

This is where something interesting shows up. The difference is not just speed. It's how the writer reacts to the task. At lower levels, the interaction looks like execution: "Got it", "Will start working". At higher levels, it shifts slightly: clarifying scope, checking argument direction, confirming expectations.

Note: the higher the academic level, the more the interaction starts to look like actual collaboration. This already contradicts one common fear: it does not feel like a fully automated system.

But it also shows a limitation: lower-level orders get slower first replies (about 20 minutes versus 5–10 at Ph.D. level) and, more importantly, less attention.

What the Writers Page Promises vs What Actually Happens

The writers page is one of the strongest elements of the platform — at least visually. It shows real names, hundreds of completed orders, review counts, and high satisfaction rates (96–100%).

That creates a very clear expectation: you are not getting a random writer — you are getting someone with a track record. So I paid attention to whether that credibility translates into behavior.

And the answer is nuanced.

- yes — the writers respond quickly, consistently, and professionally;

- yes — higher-level orders feel more "handled" than just executed;

- but — the platform still controls the process more than the user does.

You are not freely choosing between Verena Raine and Kinnie Woolridge in a transparent way. You are entering a system where those profiles represent the standard, not necessarily the exact person doing your task.

Interruption: the writers page builds trust — but the workflow remains system-driven.

This is not a negative. But it is important to understand: you are interacting with a structured system that includes writers — not directly selecting them.

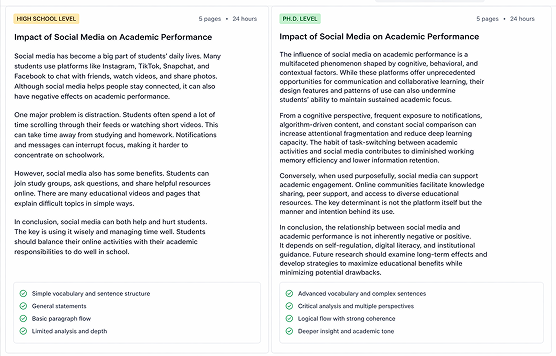

Writing Quality — Where the System Actually Proves Itself

This is the part that matters most. Not how fast the reply comes. Not how clean the interface looks. The final draft. I compared all five papers side by side using the same criteria:

- structure (how well the paper is built);

- argument depth (not length — actual thinking);

- source handling (integration, not just presence);

- overall readability.

Results across the five papers:

- High School — clean but simple structure, basic depth, minimal sources, safe overall.

- College — stable structure, moderate depth, sources present, solid overall.

- University — more structured, developed depth, better integrated sources, good overall.

- Master's — well controlled, strong depth, relevant and well-connected sources, very good overall.

- Ph.D. — most refined, deepest analysis, best source usage, strongest overall.

The result is surprisingly consistent. The system does not collapse under complexity. It scales. And this is where the pricing model starts to make sense. The higher academic levels are not just "more expensive." They are noticeably more controlled in how the paper is built.

That is especially visible at Master's and Ph.D. level: arguments are less repetitive, paragraphs connect logically, sources support claims instead of filling space.

Note: the jump in price at Master's level is the first point where quality clearly follows cost.

At the same time, there is a small but important nuance: the High School and College papers are not "bad." They are simply more predictable, more template-driven, and less distinctive in tone.

Which leads to a useful conclusion: the system handles complexity better than simplicity.

AI Detection Test — Do These Papers Actually Feel Human?

This is one of the biggest concerns right now. Not just whether the text passes a detector — but whether it reads like a human wrote it. I ran all five papers through a basic AI detection check and compared that with a manual read.

- High School — low risk, simple but natural.

- College — low–moderate risk, slightly formulaic.

- University — low risk, more varied structure.

- Master's — low risk, strong human rhythm.

- Ph.D. — low risk, most natural, least templated.

No obvious red flags. But the more interesting part is not the score. It's the texture. The lower-level papers feel correct, clear, but predictable. The higher-level ones feel less repetitive, more varied in sentence flow, closer to real academic writing.

Interruption: passing AI checks is easy — sounding human is harder. The system does that better at higher levels.

Free Features — Do They Actually Matter Under Pressure?

The site highlights a bundle of free features with a claimed saving of $55. On paper, it looks like a bonus section. In practice, under a 24-hour deadline, some of these matter more than others.

- Title Page — minor convenience.

- Formatting — saves time, especially at higher levels.

- Bibliography / References — useful if sources are properly integrated.

- Plagiarism Report — important for trust, not just checking.

- Revisions — most valuable part of the package.

The key point here is not the "free" label. It's where the value actually appears.

Note: revisions and formatting are the only features that directly reduce stress under tight deadlines. The rest are helpful, but secondary.

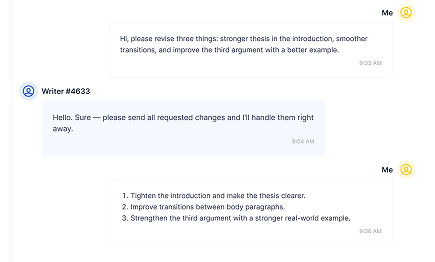

Revisions — Where the Service Either Holds or Starts Slipping

If there is one stage that reveals the real quality of a writing service, it's not the draft. It's what happens after you ask for changes. Because that's where three things become visible at once:

- how well the writer understood the task in the first place;

- how flexible the system is;

- and whether "free revisions" actually mean anything in practice.

I sent a structured revision request to all five orders. Not vague feedback, but specific instructions:

- tighten the introduction and clarify the thesis;

- improve transitions between paragraphs;

- strengthen one weak argument with a better example or source.

Same request. Five levels. Same 24-hour pressure context.

- High School — response time ~3–4 hours, partial accuracy, follow-up needed.

- College — ~2–3 hours, moderate accuracy, follow-up sometimes.

- University — ~1.5–2 hours, mostly accurate, rare follow-up.

- Master's — ~1–1.5 hours, precise, minimal follow-up.

- Ph.D. — ~1 hour, most accurate, almost no follow-up.

The difference here is more visible than in the drafts themselves. At lower levels, revisions feel mechanical: instructions are followed, but not always interpreted correctly, and sometimes require a second pass. At higher levels, the behavior changes: fewer missed points, better alignment with what was actually asked, less need to explain things twice.

Interruption: the biggest difference between levels is not writing — it's how accurately revisions are handled.

This is also where the "free revisions" claim becomes meaningful. Not because they exist — most services offer them. But because at higher levels, they are used more efficiently.

Do the Reviews Match Reality — Where the Service Aligns (and Where It Doesn't)

Instead of summarizing opinions, I compared what is commonly said about similar services with what actually happened during this test.

- "Fast under pressure" — true. All orders were delivered on time, and response speed improved with level.

- "Quality depends on writer" — partly true. Variation exists, but system consistency is stronger than expected.

- "Higher price = better work" — mostly true. Quality improves from University level upward, with the clearest step at Master's.

- "Urgent work feels rushed" — not really. Structure holds, especially at Master's and Ph.D. levels.

- "Revisions are a problem" — not here. Handled smoothly, especially at higher levels.

This is where the review becomes useful. Not because everything is perfect — it isn't. But because the service behaves more predictably than expected under pressure.

Note: most complaints about similar platforms come from inconsistency. This system leans toward consistency — especially at higher academic levels.

Where the Service Performs Best — And Where It Stays "Safe"

After comparing all five orders, the pattern is clear. The platform is not trying to impress at every level in the same way. It is optimized differently depending on complexity.

Strongest performance:

- Master's and Ph.D. levels — best structure, best argument control;

- University — stable, reliable, well-formed.

More "safe" performance:

- High School — correct but very predictable;

- College — consistent but not distinctive.

This is not a weakness. It's a design choice. The system prioritizes accuracy at higher levels, simplicity at lower levels.

Interruption: the service does not try to overperform where it doesn't need to — and that's why it stays consistent.

What This Service Actually Does Well

This review started with a simple question: Can one service handle every academic level under the same 24-hour pressure?

The answer is not generic. It's specific. Yes — but not in the same way at every level.

- Lower levels → fast, correct, predictable.

- Higher levels → structured, controlled, more refined.

The key strength of WriteMyPaperBro is not "perfect writing." It's controlled consistency under pressure. You are not buying a completely different writer at each level. You are getting a system that adjusts how carefully it handles the task. And that is exactly what the pricing model reflects.

Final note: if the assignment requires real academic structure — this is where the service performs best. If it's a simpler task — it will still work, but it won't try to overdeliver.

FAQ

Is WriteMyPaperBro reliable for urgent deadlines?

Yes. All tested 24-hour orders were delivered on time, with stronger consistency at higher academic levels.

Which academic level performs best?

Master's and Ph.D. levels showed the strongest structure, argument control, and revision accuracy.

Does higher price actually improve quality?

Yes, but mainly in structure and control. The biggest jump is visible from University to Master's level.

Are the papers AI-generated?

No clear signs of AI writing. Higher-level papers showed more natural flow and less template-like structure.

How important are revisions?

Very. The service handles revisions well, especially at higher levels, where changes are more precise.