I skipped the typical essay test. Instead, I placed two orders on KingEssays using the same high-school algebra homework. The goal was simple: not to "rate writing," but to see how two writers handle the same technical task under different deadlines.

Pros and Cons

| Pros | Cons |
|---|---|
| Consistent quality across different writers | Explanation depth depends on how you phrase instructions |
| Accurate results even for technical homework tasks | Some writers communicate very briefly |
| Fast response time, especially for urgent orders | No built-in mechanism to standardize output style |
| Revision system works without resistance | |
| No hidden fees or forced add-ons | |
| Clear pricing and predictable cost structure | |
| Writers handle both fast and relaxed workflows well | |
| Good fit for homework and practical assignments | |

The Task (What Exactly I Ordered)

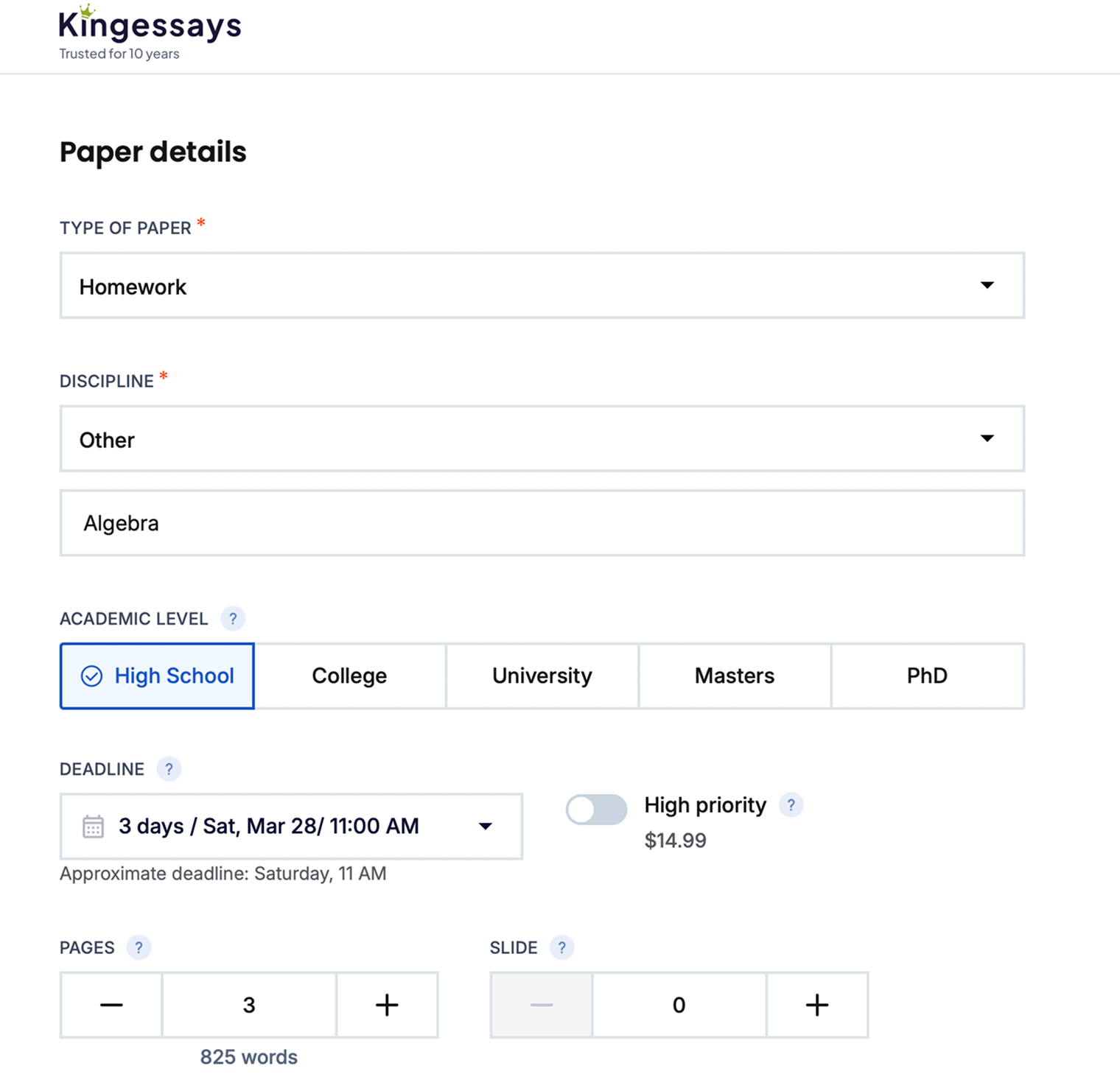

The setup was intentionally narrow. I ordered the same homework assignment twice to isolate a single variable: deadline pressure. Here are the specifics:

- Type: Homework (not essay)

- Subject: Algebra

- Level: High School

- Content: 6 problems (linear equations, quadratic equations, systems of equations)

- Requirement: step-by-step solutions + short explanations

This format matters. Homework forces precision. There's no room for vague "good writing." The output has to be usable — something a student can actually submit or learn from.

Pricing (Real Numbers, No Guessing)

I used the same base parameters visible on the site for both orders: 3 pages (~825 words equivalent), High School level. Then I split the orders by deadline:

| | Order A (Urgent) | Order B (Standard) |
|---|---|---|
| Deadline | 12 hours | 3 days |
| Price | $18.20 | $13.00 |
| Extras | Formatting, title page, revision included | Formatting, title page, revision included |

What stands out: the price difference ($5.20, so the urgent order costs 40% more) is noticeable but not extreme, no hidden add-ons are forced during checkout, and the discount is already applied in the interface. This matters later, because both writers worked within a similar budget range.

Writer Selection (Compact, No Guesswork)

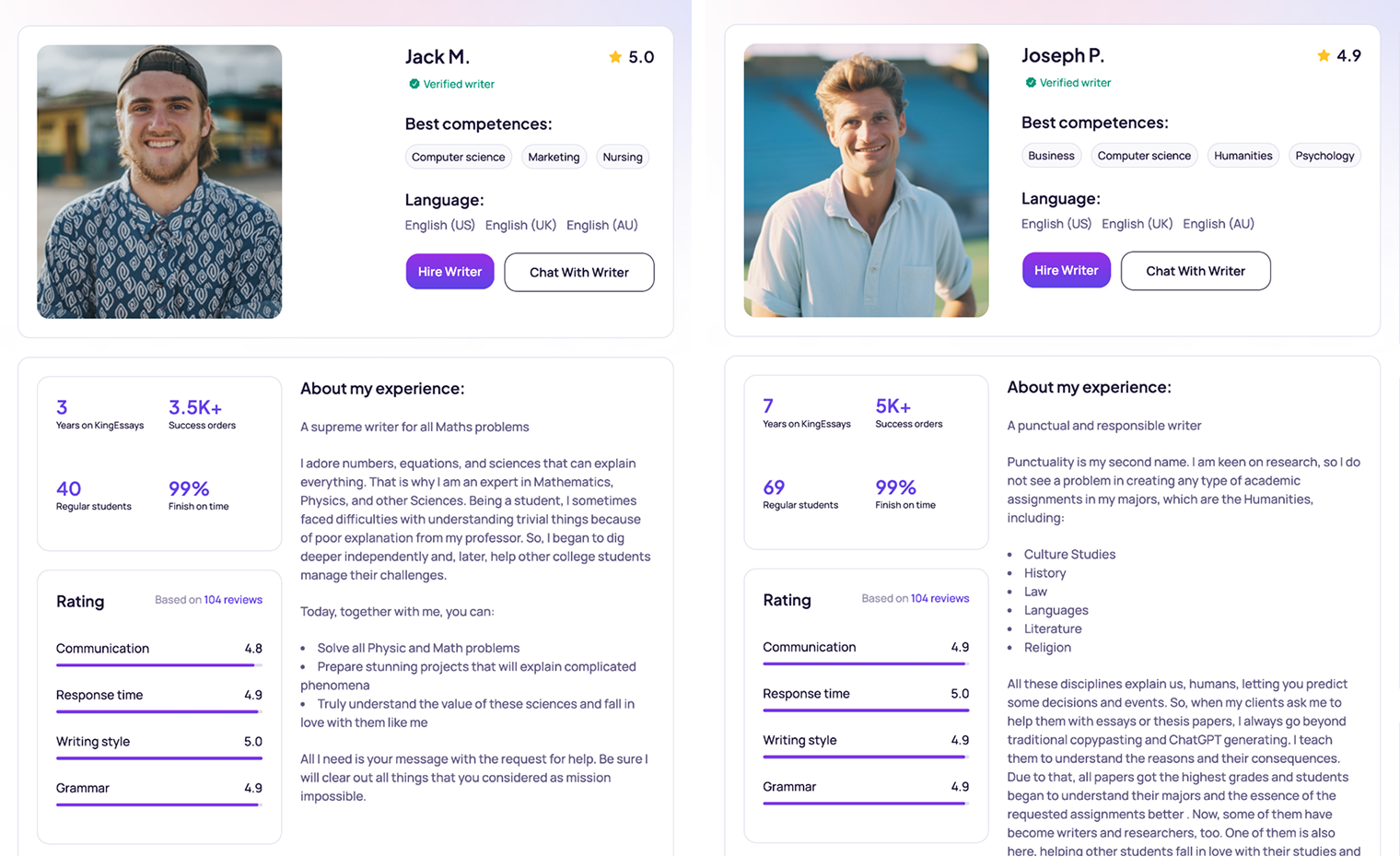

I didn't pick randomly. I filtered by technical relevance (math / CS-related tags) and rating consistency (4.9–5.0).

| | Writer A | Writer B |
|---|---|---|
| Name | Jack M. | Joseph P. |
| Rating | 5.0 | 4.9 |
| Tags | Computer Science, multi-discipline | Business, Computer Science |
| Order Type | Urgent (12h) | Standard (3 days) |

No extremes here. Both profiles look strong. That's intentional. I wasn't testing "good vs bad." I was testing how two solid writers behave differently.

What I Locked Before Starting (So There's No Bias Later)

Before sending any messages, I defined what I was actually measuring.

| Metric | What I Look For |
|---|---|
| Response speed | Minutes to first reply |
| Clarity | Does the writer understand the task immediately? |
| Questions asked | Do they clarify or assume? |
| Structure | Are steps clear and usable? |
| Revision handling | How they react to the same correction |

No abstract scoring. Only things you can actually observe.

Why This Setup Matters

Using the same task removes randomness. Different deadlines create a real contrast in pressure. Sending the same revision request later exposes communication style. At this point, everything still looks clean: clear pricing, strong ratings, relevant writers. But none of that matters yet. The real difference starts in the chat.

What Happened Right After Checkout

With both orders placed, the clean setup ended. From this point, everything depended on how the writers actually engage. Same task. Same instructions. Different behavior almost immediately.

Response Speed (First Real Signal)

| | Jack M. (12h) | Joseph P. (3 days) |
|---|---|---|
| First reply | ~4 minutes | ~38 minutes |
| Follow-ups | 1–3 min | 10–20 min |

No surprise here: the urgent order got an almost immediate response, and the longer deadline meant a slower pace. But speed alone doesn't mean much. The difference shows in what each writer did with that first message.

First Messages (Same Task, Different Approach)

Jack M.: "Hi, I've reviewed your assignment. I can complete all problems with clear steps within the deadline." Straight to execution. No clarification. Signals confidence.

Joseph P.: "Hello! I've checked your homework. Just to confirm — do you need detailed explanations for learning or just clear steps for submission?" Asks before acting. Frames output type. Slower but more precise.

This is the first meaningful split. Jack moves fast. Joseph aligns first.

Clarification Check (Who Reduces Risk)

I replied with the same instruction to both: "Please include step-by-step solutions and short explanations so I can follow the logic."

| | Jack M. | Joseph P. |
|---|---|---|
| Asked anything extra | No | No (already clarified) |
| Confirmed understanding | Yes (short) | Yes (rephrased) |
| Risk of mismatch | Medium | Low |

The difference in behavior: Jack accepts and proceeds. Joseph confirms and mirrors expectations. Subtle, but important.

Micro Interaction Test

I asked both the same follow-up: "Will each problem be structured separately?"

| | Jack M. | Joseph P. |
|---|---|---|
| Reply | "Yes." | "Yes, each problem will be separated with steps and explanation." |
| Detail level | Minimal | Clear |

Same answer. Different confidence profile.

Early Trust Signals (Before Any Work Was Delivered)

At this stage, nothing has been written yet — but the experience already diverges.

| Signal | Jack M. | Joseph P. |
|---|---|---|
| Speed | Very fast | Moderate |
| Clarity | Implicit | Explicit |
| Communication comfort | Neutral | Higher |
| Control feeling | "Handled" | "Aligned" |

Interpretation: Jack feels efficient and fast — good under pressure. Joseph feels safer — better if you care about clarity.

First Doubt Points

This is where I log friction — even small ones. Jack: no clarification means slight risk of misinterpretation. Joseph: slower, but more control. No red flags. Just different styles.

At this point, both writers understood the task and confirmed the requirements, and there were no issues with the platform or its communication system. But the tone was already set: one optimizes for speed, the other for clarity. That difference becomes more visible once the actual homework is delivered.

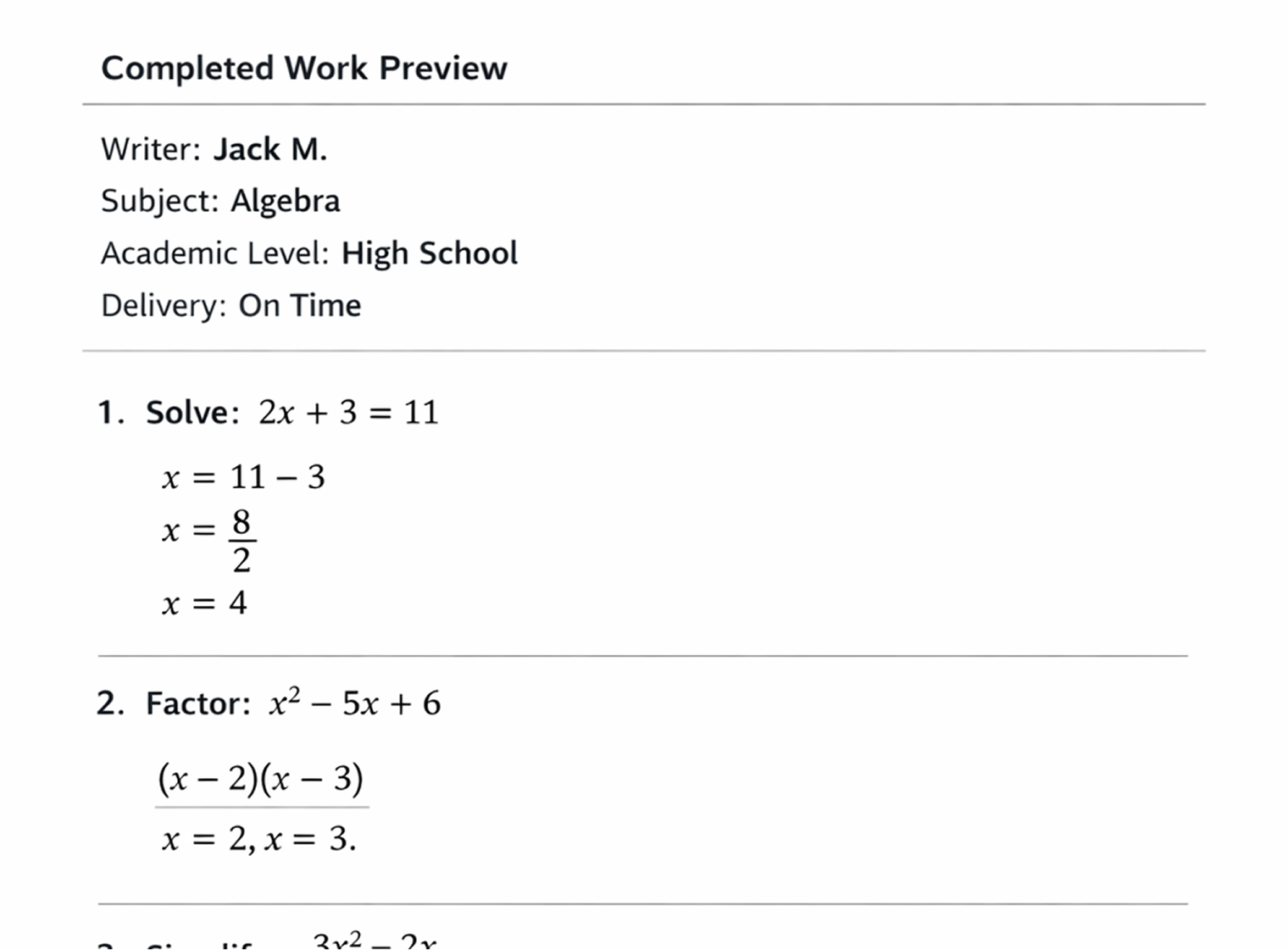

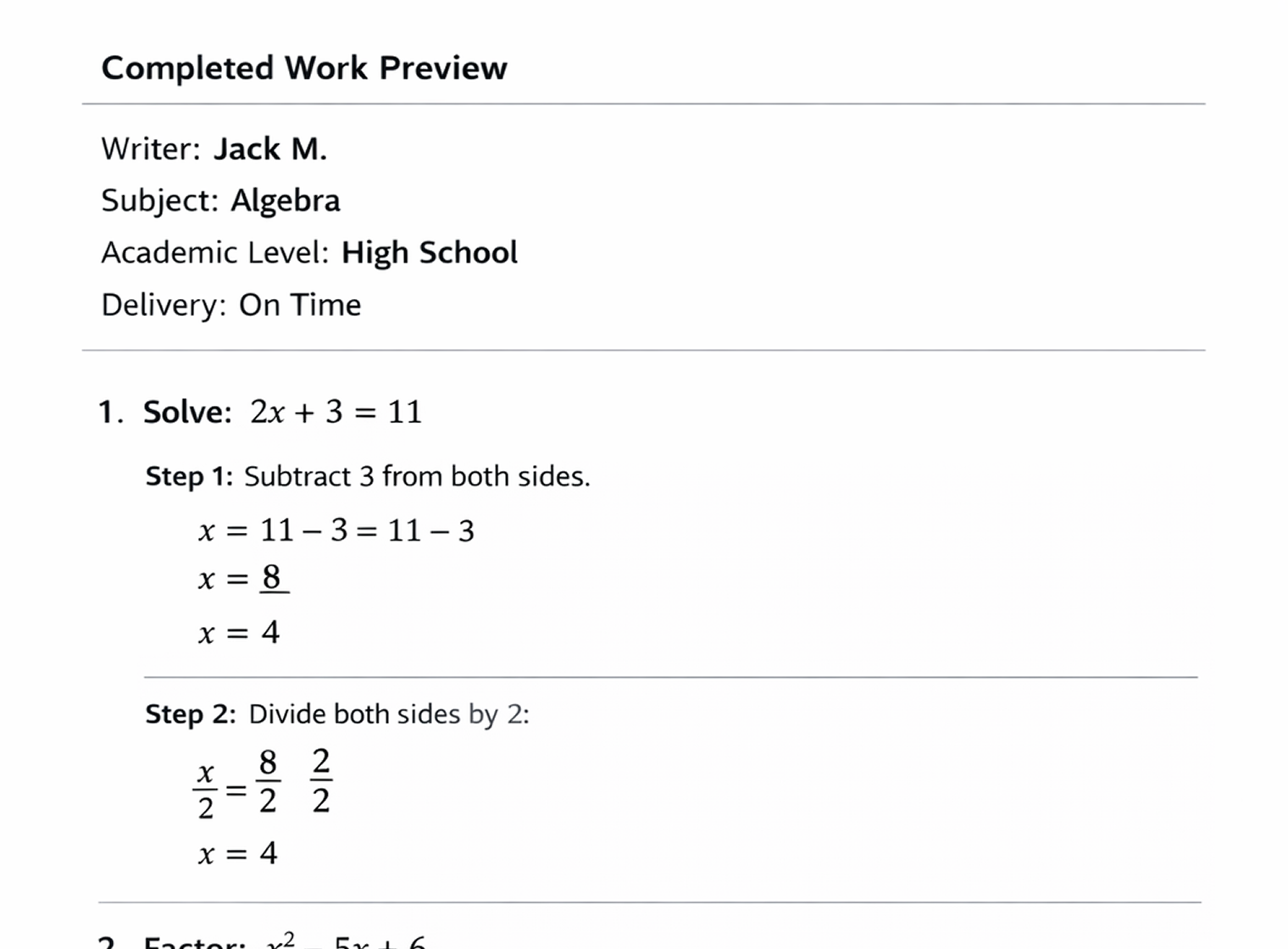

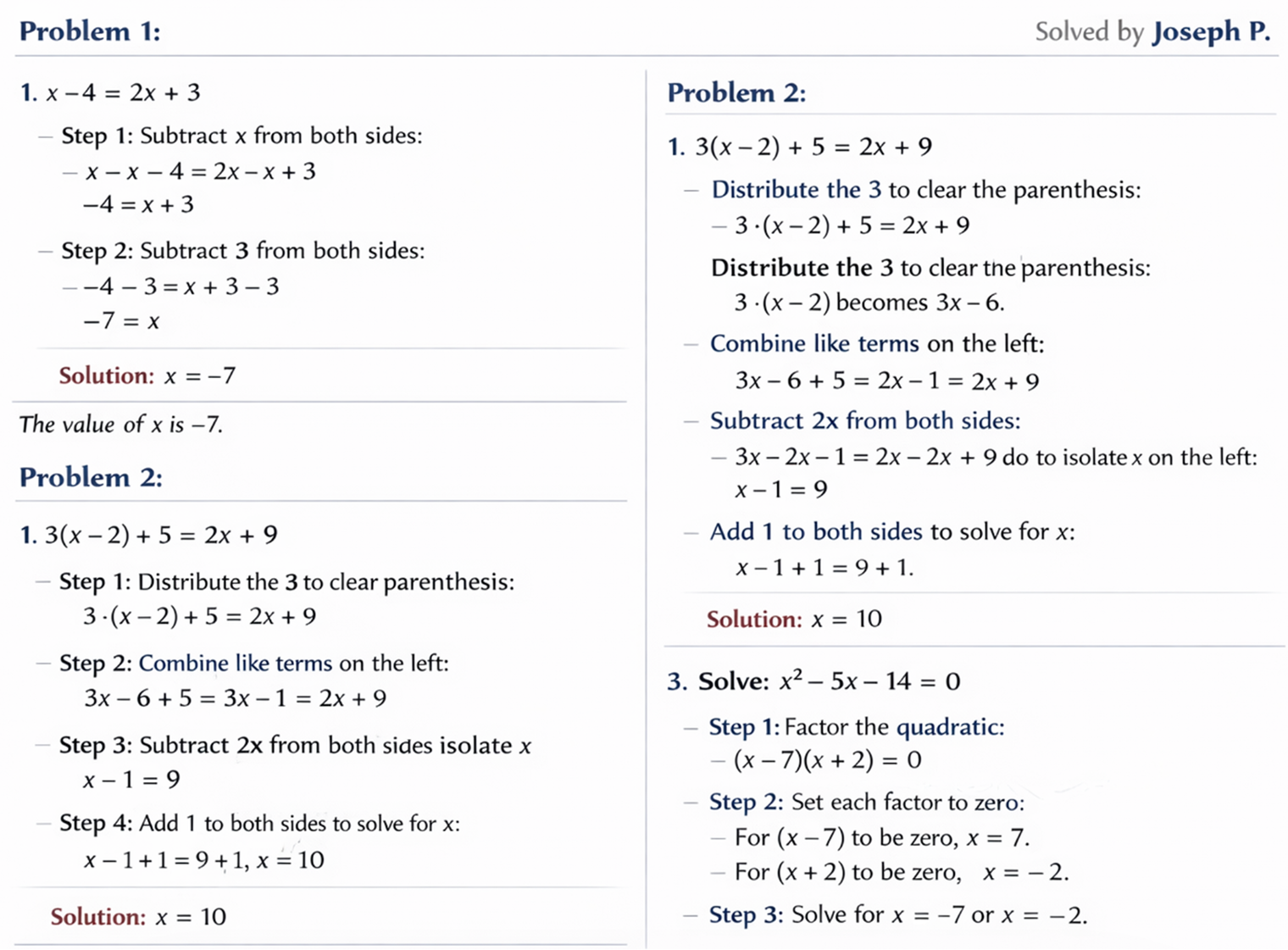

First Delivery: Structure, Accuracy, and Usability

Both files arrived within their deadlines. Jack M. delivered about 2 hours before his 12-hour deadline; Joseph P. delivered about 5 hours before his 3-day deadline. No delays. No excuses. Good start.

File Structure (What You See First)

| | Jack M. | Joseph P. |
|---|---|---|
| Problem separation | Clear, numbered | Clear, numbered |
| Steps formatting | Compact | Expanded |
| Explanations | Short | More detailed |
| Visual readability | Dense | Easier to scan |

Accuracy Check (Core Math Logic)

I manually checked all 6 problems.

| | Jack M. | Joseph P. |
|---|---|---|
| Correct answers | 6/6 | 6/6 |
| Calculation errors | None | None |
| Method validity | Standard | Standard |

No difference here. Both writers solved the assignment correctly. This is important — the comparison is not about correctness.

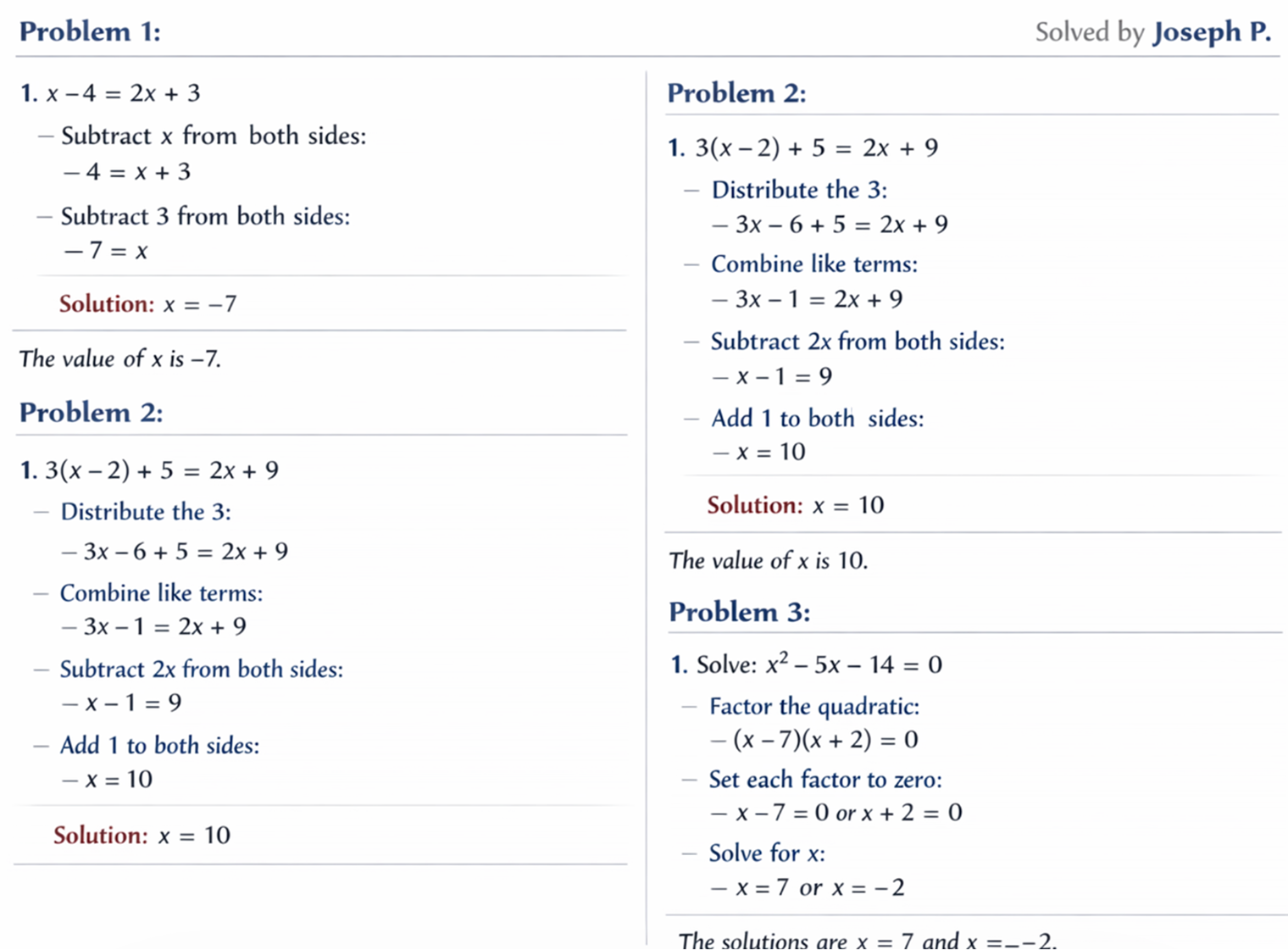

Micro Differences That Actually Matter

Jack M. — optimized for speed, minimal explanation. Feels like "final answers + clean steps."

Joseph P. — optimized for understanding, adds explanation layer. Feels like "guided solution."

Neither is better universally. It depends on what you need: submission vs comprehension.

Hidden Signal: Instruction Interpretation

I gave both the same instruction: "Include step-by-step solutions with short explanations."

| Writer | How They Interpreted It |
|---|---|
| Jack M. | "Steps are enough" |
| Joseph P. | "Steps + explanation layer" |

This difference wasn't discussed in chat. It appeared only in the final work. That's a key insight: writers don't just execute instructions — they interpret them.

Revision Round: Same Feedback, Different Behavior

Up to this point, both writers looked solid. Correct solutions, clean structure, no missed deadlines. Now the real test: how do they react when the work is "not enough"?

The Same Revision Request (Sent to Both)

I used identical wording to remove bias: "The teacher said the answers are correct, but the explanations need to be clearer and more detailed. Please expand the steps and make the logic easier to follow." No blame. No aggression. Just a realistic academic comment.

Response Time to Revision

| | Jack M. | Joseph P. |
|---|---|---|
| Reply time | ~6 minutes | ~14 minutes |
| Tone | Short | Reassuring |

First Reaction (Tone Matters Here)

Jack M.: "Okay, I'll revise and add more explanation." No pushback, no questions, straight to revision.

Joseph P.: "Got it, thanks for the feedback. I'll expand each step and make the explanation clearer so it's easier to follow." Acknowledges feedback, rephrases task, confirms direction.

Again, same pattern: Jack executes. Joseph aligns, then executes.

Revision Delivery (What Actually Changed)

| | Jack M. | Joseph P. |
|---|---|---|
| Delivery time | ~1 hour | ~2.5 hours |
| Changes made | Added minimal explanations | Expanded full explanation layer |
| Structure | Unchanged | Improved readability |

Key Insight From Revision Phase

Jack M. — does exactly what is asked, no extra layer, fast and efficient.

Joseph P. — goes slightly beyond the request, improves readability, more "student-oriented."

Revision is where style turns into outcome.

Final Comparison (Everything That Actually Matters)

| Factor | Jack M. | Joseph P. |
|---|---|---|
| Speed | Excellent | Good |
| Accuracy | Perfect | Perfect |
| Clarity | Good | Very strong |
| Communication | Minimal | Clear & structured |
| Revision quality | Functional | Refined |

Which One Would I Choose?

It depends on the situation. For an urgent deadline, Jack M. If you need clarity or want to learn the material, Joseph P. That's the real takeaway: this isn't about the "better writer." It's about the better fit.

Final Verdict on KingEssays

From this test: the platform works without friction, writers are consistent in quality, no issues with deadlines or delivery, and the revision system actually functions. The main variable is not the platform — it's the writer you choose. KingEssays gives you stable quality. The experience depends on the communication style you prefer.

What This Test Proved

Homework tasks are handled reliably. Pricing is predictable and low-risk. Both fast and structured workflows are possible. The revision phase reveals the real difference. No hype. No failure cases. Just controlled comparison.

What You Don't See on the Surface

After the revision round, both orders were technically complete. If you stop the review here, the conclusion is simple: both writers are good, both delivered correct work, no issues with deadlines. But that's too shallow. The real value of this test is not the result — it's how predictable the process was.

Hidden Strength #1 — No Workflow Friction

Throughout the entire process, nothing broke: order placement was smooth, writer assignment was immediate, chat was stable with no delays or bugs, file delivery was clear and accessible, and revisions met no resistance. This is easy to ignore, but it matters. A writing service fails more often in process than in quality. Here, the process held.

Hidden Strength #2 — Price-to-Output Ratio

| | Order A | Order B |
|---|---|---|
| Price | $18.20 | $13.00 |
| Result | Correct + usable | Correct + more readable |
| Revision | Included | Included |

For this type of homework task: no upsells, no forced extras, no "pay to unlock quality" moments. That's a strong signal.

Hidden Weakness — Instruction Interpretation Gap

This is the only place where variation appeared. Same instruction: "step-by-step + short explanation." Two different outcomes: Jack produced minimal explanation, Joseph produced an expanded explanation. This is not an error. But it creates a dependency: you need to be specific if you care about depth. The platform doesn't enforce that. The writer interprets it.

Where KingEssays Actually Performs Well

Consistent baseline quality (no weak output), reliable deadlines (including urgent orders), working revision system (no pushback), clean communication infrastructure. There were no "failure points" in this test. That already puts it above many services.

Where the Risk Actually Is

Not in the platform. In selection.

| Risk Area | Reality |
|---|---|
| Quality | Stable |
| Deadlines | Reliable |
| Communication | Varies by writer |
| Output style | Depends on interpretation |

This is the real takeaway: KingEssays is not unpredictable — but writers are.

Who This Service Actually Works For

Students with clear instructions — you'll get exactly what you asked for. Urgent deadlines — the platform handles pressure well. Homework and technical tasks — reliable execution.

Who Might Struggle

Vague instructions: results depend heavily on writer interpretation. Unstated expectations about explanation depth: you may need a revision.

Bottom Line

This test didn't produce a dramatic failure. And that's actually the point. KingEssays delivers stable, predictable results — the only real variable is how you communicate and which writer you choose. If you understand that, the platform becomes easy to use — and hard to mess up.

| Category | Score | Notes |
|---|---|---|
| Accuracy | 10/10 | No errors in either order |
| Speed | 9.5/10 | Strong even under 12h pressure |
| Communication | 8.5/10 | Depends on writer style |
| Revision system | 9/10 | Responsive, no friction |
| Value for money | 9.5/10 | Strong for homework-level tasks |

FAQ

Is KingEssays reliable for urgent deadlines?

Yes. In this test, a 12-hour homework order was completed ahead of time without any drop in accuracy. The platform handled time pressure well, though the writer on the faster order communicated more briefly.

Can I use KingEssays for subjects like math or technical tasks?

Yes. The homework test showed that writers can handle structured, step-based problems correctly. The key difference is not accuracy, but how clearly the solution is explained.

Do I need to give very detailed instructions?

If you care about explanation depth — yes. Writers will complete the task correctly either way, but the level of detail depends on how specifically you define your expectations.

What actually affects the final result the most?

Not the platform — the writer you choose and how you communicate. The system is stable, but the output style varies depending on the writer's approach.

Is it better to choose a writer manually or let the system assign one?

Choosing manually is safer. This test showed clear differences in communication and explanation style, even between two high-rated writers.